The lost art of checking your sources

Y'all, this is just getting embarrassing at this point.

While working on my latest paper (LLMs can be pruned if you’re using them for embedding!

I’m no stranger to citing blog posts and web pages. Plenty of useful, prominent resources are hosted on nothing more than a random webpage, or on a service like HuggingFace or Kaggle. No shame in citing DataCanary et al. when you need to. In this case, however, the Kaggle link

Currently, Quora uses a Random Forest model to identify duplicate questions. In this competition, Kagglers are challenged to tackle this natural language processing problem by applying advanced techniques to classify whether question pairs are duplicates or not.

Competitions come with their own datasets, and the licensing for each is set by whoever runs the competition. Good news for this one: the data license is clearly visible, as part of the competition rules. Here’s a key excerpt:

…Participants must use the Data solely for the purpose and duration of the Competition…

Full dataset license information

‘Data’ means the Data or Datasets linked from the Competition Website for the purpose of use by Participants in the Competition. For the avoidance of doubt, Data is deemed for the purpose of these Competition Rules to include any prototype or executable code provided to Participants by Kaggle or Competition Sponsor via the Website. Participants must use the Data only as permitted by these Competition Rules and any associated data use rules specified on the Competition Website.

Unless otherwise permitted by the terms of the Competition Website, Participants must use the Data solely for the purpose and duration of the Competition, including but not limited to reading and learning from the Data, analyzing the Data, modifying the Data and generally preparing your Submission and any underlying models and participating in forum discussions on the Website. Participants agree to use suitable measures to prevent persons who have not formally agreed to these Competition Rules from gaining access to the Data and agree not to transmit, duplicate, publish, redistribute or otherwise provide or make available the Data to any party not participating in the Competition. Participants agree to notify Kaggle immediately upon learning of any possible unauthorized transmission or unauthorized access of the Data and agree to work with Kaggle to rectify any unauthorized transmission. Participants agree that participation in the Competition shall not be construed as having or being granted a license (expressly, by implication, estoppel, or otherwise) under, or any right of ownership in, any of the Data.

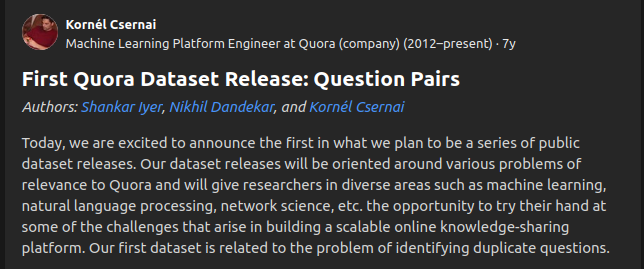

Oops. Quora Question Pairs turns out to not be licensed for research use! Except that’s not quite right: while the Kaggle link is the first thing that comes up when you search for it, the third result down is the dataset on the Quora website itself. Surprise! It has actual authors!

Specifically, Shankar Iyer, Nikhil Dandekar, and Kornél Csernai. So why exactly is renowned author hilfialkaff DataCanary cited instead?

Just to be clear, the Kaggle one isn’t a unique permutation of it or anything: it’s the exact same dataset.

Beyond being plain embarrassing, I think this is indicative of a pervasive attitude in NLP and AI research in general: a lack of care for dataset licensing and proper attribution. This is a particularly egregious example, and I’m genuinely surprised no one across these thirty-odd papers caught it (hilfialkaff DataCanary? Really?), but there are probably plenty of similar, sneakier misattributions hiding in other papers. As much as hunting for licenses is a pain in the ass, I think it’s fair to expect papers to have a thorough licenses section (mine does!). ACL seems to be moving towards that with their Responsible NLP checklist,